
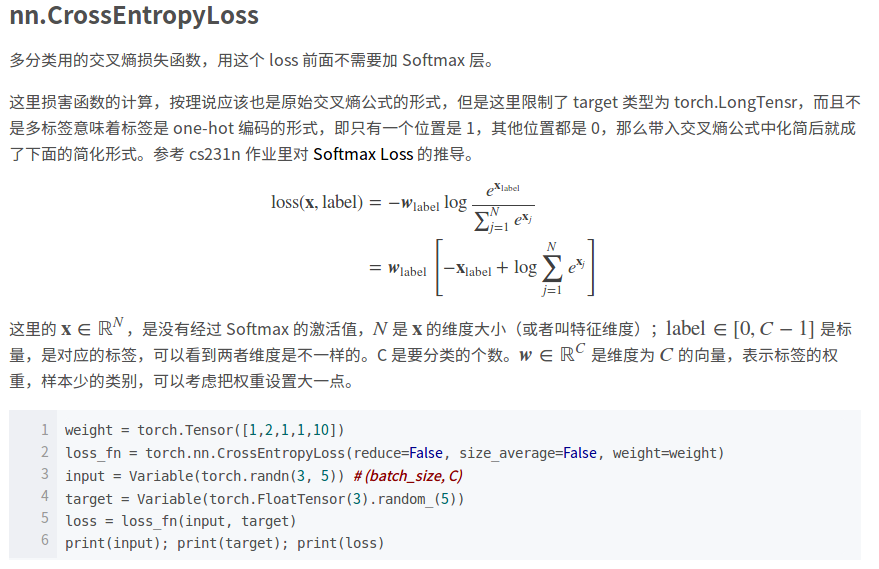

PyTorch cross entropy loss

3/16/2024

Supporting code for this section: /Code/3_optimizer/3_1_lossFunction

1. L1Loss

class torch.nn.L1Loss(size_average=None, reduce=None)

Note: at the time of writing, the official documentation still listed a reduction='elementwise_mean' parameter, but the code no longer implemented it. Current PyTorch releases expose this choice as reduction, with the values 'none', 'mean', and 'sum'.

Function: computes the absolute value of the difference between the output and the target. It can return either a single scalar or a tensor with the same dimensions as the input.
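To make the L1 loss concrete, here is a minimal sketch with a manual check. It assumes a recent PyTorch release in which `torch.nn.L1Loss` accepts `reduction='none'`, `'mean'`, or `'sum'`; the tensor values are made up for illustration and do not come from the tutorial's own code.

```python
import torch
import torch.nn as nn

# Hypothetical model outputs and targets (illustrative values only).
output = torch.tensor([[1.0, 2.0], [3.0, 4.0]])
target = torch.tensor([[0.5, 2.0], [5.0, 3.0]])

# reduction='none' keeps one loss value per element (same shape as the input).
elementwise = nn.L1Loss(reduction="none")(output, target)

# reduction='mean' averages the per-element losses into a single scalar.
mean_loss = nn.L1Loss(reduction="mean")(output, target)

# reduction='sum' adds the per-element losses into a single scalar.
sum_loss = nn.L1Loss(reduction="sum")(output, target)

# Manual calculation: |output - target|, then average over all elements.
manual = (output - target).abs().mean()

print(elementwise)       # per-element absolute differences
print(mean_loss.item())  # (0.5 + 0.0 + 2.0 + 1.0) / 4
print(sum_loss.item())   # 0.5 + 0.0 + 2.0 + 1.0
print(manual.item())     # should match mean_loss
```

The manual line reproduces what the built-in loss does, which is a quick way to check your understanding of any loss function before relying on it in training.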

Please run the accompanying code. It is explained in detail and includes manual calculations, which help in understanding how each loss function works. But does a smaller loss value mean that a classification or regression model is more accurate? And with so many loss functions available, how do you choose one? Let's look at the seventeen loss functions that PyTorch provides. When we talk about optimization, optimizing a network means adjusting the weights so that the value of the loss function becomes smaller.

Code repository: Tensor-yu/PyTorch_Tutorial

Disclaimer: This is an original article by the blogger. If you reproduce it, please include a link to the original post. This article is an excerpt from "PyTorch model practical training course". To get the full-text PDF, please click:
